You built an LLM application that works brilliantly in your demos. The product team is excited. Leadership wants it in production by next quarter. But then comes the question that stops every AI project cold: how do you know it actually works?

Traditional software testing gives you binary answers — the function returns the expected output or it doesn't. LLM evaluation is fundamentally different. Your model might give five different valid answers to the same question. It might be factually correct but miss the user's intent. It might work perfectly on your test cases and fail spectacularly on real user queries.

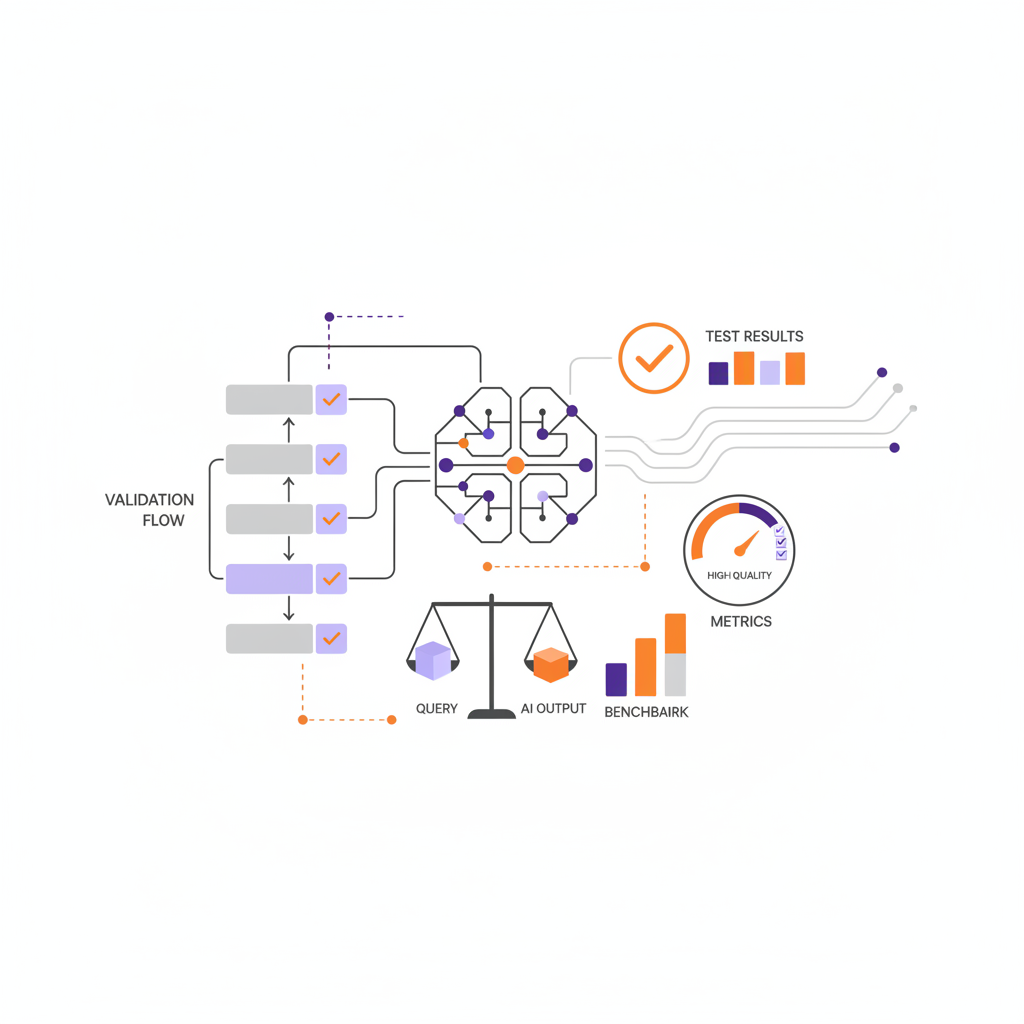

TL;DR: LLM evaluation requires different thinking than traditional software testing. You can't just check for exact matches — you need to measure factuality, relevance, coherence, safety, and task completion across diverse test cases. Build evaluation datasets from real user queries, use automated evaluation techniques (LLM-as-Judge, embedding similarity, traditional NLP metrics), integrate testing into CI/CD, and continuously monitor production quality. This guide covers the complete pipeline from dataset creation to production monitoring.

Why LLM Evaluation is Different

Software engineers approach LLM evaluation expecting it to work like unit testing. It doesn't. Understanding why helps you build better evaluation systems.

Non-Deterministic Outputs

Even with temperature set to zero, LLM outputs can vary between API calls due to infrastructure changes, model updates, or floating-point precision differences. The same prompt might generate "The capital of France is Paris" today and "Paris is the capital of France" tomorrow. Both are correct, but a naive string comparison fails.
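
To make the failure of naive string comparison concrete, here is a minimal sketch that compares exact match with an order-insensitive token-overlap score on the two sentences above. The helper names (`exact_match`, `token_f1`) are illustrative and not taken from any library, and token overlap is only one of several ways to tolerate rephrasing.

```python
import re
from collections import Counter


def exact_match(a: str, b: str) -> bool:
    """Naive comparison: fails on any rewording."""
    return a == b


def token_f1(prediction: str, reference: str) -> float:
    """Order-insensitive token overlap (F1) between two strings."""
    pred = re.findall(r"\w+", prediction.lower())
    ref = re.findall(r"\w+", reference.lower())
    if not pred or not ref:
        return 0.0
    # Multiset intersection counts each shared token at most as often as it appears in both.
    overlap = sum((Counter(pred) & Counter(ref)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred)
    recall = overlap / len(ref)
    return 2 * precision * recall / (precision + recall)


today = "The capital of France is Paris"
tomorrow = "Paris is the capital of France"

print(exact_match(today, tomorrow))  # False
print(token_f1(today, tomorrow))     # 1.0
```

Token overlap ignores word order entirely, so it can also score a wrong answer highly if it reuses the same words. Treat it as a cheap first filter rather than a correctness check.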

Multiple Valid Answers

Ask an LLM to summarize a document and any of dozens of different summaries could be valid. Ask it to write a function and there are many correct implementations. Your evaluation system must recognize that different doesn't mean wrong.

Evaluation Requires Judgment

Determining if an LLM response is "good" often requires human-level understanding. Is the answer factually correct? Does it address the user's actual intent? Is the tone appropriate? These judgments don't reduce to simple assertions.

Failures are Subtle

LLMs rarely crash. They fail silently, generating plausible-sounding nonsense. A hallucinated fact looks identical to a correct one. A subtly wrong code suggestion compiles and runs but produces incorrect results. Your evaluation must catch these silent failures.

Evaluation Dimensions

Production LLM systems need evaluation across multiple dimensions. A response can score well on factuality but fail on relevance, or be highly relevant but poorly structured.

| Dimension | What It Measures | How to Evaluate |
|---|---|---|
| Factuality | Are claims accurate and verifiable? | Compare against source documents, fact-checking, retrieval verification |
| Relevance | Does the response address the query? | Semantic similarity to ideal answer, LLM-as-Judge scoring |
| Coherence | Is the response well-structured and logical? | LLM-as-Judge, readability metrics, structure validation |
| Safety | Does it avoid harmful or inappropriate content? | Safety classifiers, toxicity detection, policy compliance checks |
| Task Completion | Did it accomplish what the user wanted? | Execution testing (for code), format validation, end-to-end checks |
| Groundedness | Are claims supported by provided context? | Citation verification, context overlap analysis |
| Latency | Is response time acceptable for the use case? | Direct measurement, percentile tracking |
| Cost | Is token usage within budget constraints? | Token counting, cost tracking per query type |

Not every dimension matters equally for every application. A customer support bot prioritizes factuality and safety. A creative writing assistant weights coherence and relevance higher. Define which dimensions matter most for your use case before building evaluation infrastructure.
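
One way to write that prioritization down is a per-application weight table. The sketch below is a hypothetical illustration: the weight values and the names `DIMENSION_WEIGHTS` and `weighted_score` are assumptions made for this example, not recommended settings.

```python
# Hypothetical per-application weights; tune these for your own product.
DIMENSION_WEIGHTS = {
    "customer_support": {"factuality": 0.4, "safety": 0.3, "relevance": 0.2, "coherence": 0.1},
    "creative_writing": {"coherence": 0.4, "relevance": 0.4, "safety": 0.15, "factuality": 0.05},
}


def weighted_score(scores: dict[str, float], use_case: str) -> float:
    """Combine per-dimension scores (each in [0, 1]) into one weighted number."""
    weights = DIMENSION_WEIGHTS[use_case]
    total = sum(weights.values())
    return sum(scores[dim] * weight for dim, weight in weights.items()) / total


# The same response scores differently depending on what the application values.
scores = {"factuality": 0.9, "safety": 1.0, "relevance": 0.6, "coherence": 0.5}
support_score = weighted_score(scores, "customer_support")
creative_score = weighted_score(scores, "creative_writing")
print(round(support_score, 3), round(creative_score, 3))
```

A combined number is convenient for dashboards, but keep the per-dimension scores next to it so that a drop in one dimension is not hidden by gains in another.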

Building Evaluation Datasets

Your evaluation is only as good as your test cases. Most teams underinvest in dataset creation and pay for it with unreliable quality signals.

Collecting Real Queries

Start with actual user queries, not synthetic examples you invented. Production queries reveal edge cases, phrasing variations, and use patterns you would never anticipate. Sources include:

- Production logs — Sample from actual queries (anonymized if needed)

- Support tickets — Questions customers actually ask

- Search logs — What users search for in your product

- Failure cases — Queries that triggered complaints or escalations
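
As a rough sketch of the sampling step, the snippet below draws a fixed number of queries per category from a list of log entries and redacts email addresses. The log shape (`category` and `query` keys) and the function names are assumptions for illustration, and email redaction alone is not sufficient anonymization for real user data.

```python
import random
import re
from collections import defaultdict

EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def anonymize(text: str) -> str:
    """Redact email addresses; real pipelines need broader PII handling."""
    return EMAIL_RE.sub("[EMAIL]", text)


def sample_queries(logs: list[dict], per_category: int, seed: int = 0) -> list[dict]:
    """Draw up to `per_category` anonymized queries from each category."""
    rng = random.Random(seed)  # fixed seed keeps the sample reproducible
    by_category = defaultdict(list)
    for entry in logs:
        by_category[entry["category"]].append(entry)
    sampled = []
    for category, entries in sorted(by_category.items()):
        for entry in rng.sample(entries, min(per_category, len(entries))):
            sampled.append({"category": category, "query": anonymize(entry["query"])})
    return sampled


logs = [
    {"category": "billing", "query": "Refund for jane.doe@example.com please"},
    {"category": "billing", "query": "Why was I charged twice?"},
    {"category": "setup", "query": "How do I reset my password?"},
]
sampled = sample_queries(logs, per_category=1)
print(sampled)
```

Sampling per category rather than uniformly keeps rare query types from being drowned out by the most common ones.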

Creating Golden Answers

Each test query needs a reference answer — the "golden" response against which you compare model outputs. Golden answers should be:

- Expert-reviewed — Written or validated by domain experts

- Comprehensive — Cover all key points the response should include

- Annotated — Include metadata about required facts, format expectations, citations needed

Evaluation Dataset Format

Structure your evaluation dataset for automated testing:

```json
{
  "test_cases": [
    {
      "id": "tc-001",
      "query": "What are the refund policies for annual subscriptions?",
      "context": "Retrieved documents would go here for RAG evaluation",
      "golden_answer": "Annual subscriptions can be refunded within 30 days of purchase...",
      "required_facts": [
        "30-day refund window",
        "Pro-rated refunds after 30 days",
        "No refunds for used credits"
      ],
      "evaluation_criteria": {
        "must_mention": ["30 days", "refund"],
        "must_not_mention": ["guaranteed", "unlimited"],
        "expected_format": "paragraph",
        "max_length": 200
      },
      "metadata": {
        "category": "billing",
        "difficulty": "easy",
        "source": "production_logs"
      }
    }
  ]
}
```

Dataset Maintenance

Evaluation datasets aren't static. Plan for ongoing maintenance:

- Regular updates — Add new test cases from production failures

- Golden answer refresh — Update when underlying information changes

- Balance monitoring — Ensure coverage across categories and difficulty levels

- Versioning — Track dataset changes alongside model changes

A healthy evaluation dataset grows continuously. Start with 50-100 high-quality test cases, then expand based on production learnings.
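
Balance monitoring and versioning can start very small. The following sketch assumes the dataset is a dictionary with a `test_cases` list whose entries carry a `metadata` object, as in the format shown earlier; the function names are made up for this example.

```python
import hashlib
import json
from collections import Counter


def dataset_version(dataset: dict) -> str:
    """Short content hash that changes whenever any test case changes."""
    canonical = json.dumps(dataset, sort_keys=True, ensure_ascii=False)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


def coverage(dataset: dict, field: str) -> Counter:
    """Count test cases per value of a metadata field (for example, category)."""
    return Counter(tc["metadata"][field] for tc in dataset["test_cases"])


dataset = {
    "test_cases": [
        {"id": "tc-001", "query": "Refund policy?", "metadata": {"category": "billing", "difficulty": "easy"}},
        {"id": "tc-002", "query": "Charged twice", "metadata": {"category": "billing", "difficulty": "hard"}},
        {"id": "tc-003", "query": "Reset password", "metadata": {"category": "account", "difficulty": "easy"}},
    ]
}

print(dataset_version(dataset))
print(coverage(dataset, "category"))
print(coverage(dataset, "difficulty"))
```

Recording the version hash next to each evaluation run makes it possible to tell whether a score changed because the model changed or because the test set did.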

Automated Evaluation Techniques

Manual review doesn't scale. You need automated evaluation that runs on every change.

LLM-as-Judge

Use a capable LLM to evaluate outputs from your production model. This approach captures nuanced quality judgments that rules can't express.

```python
import json

from openai import OpenAI

client = OpenAI()  # reads OPENAI_API_KEY from the environment


def evaluate_response(query: str, response: str, golden_answer: str) -> dict:
    """Use a judge model (here gpt-4o) to evaluate response quality."""
    # Doubled braces ({{ and }}) produce literal braces inside an f-string.
    evaluation_prompt = f"""You are evaluating an AI assistant's response.

Query: {query}

AI Response: {response}

Reference Answer: {golden_answer}

Evaluate the response on these criteria (score 1-5):

1. Factuality: Are all claims accurate?
2. Relevance: Does it address the query?
3. Completeness: Does it cover key points from the reference?
4. Clarity: Is it well-written and easy to understand?

Respond in JSON format:
{{
  "factuality": <score 1-5>,
  "relevance": <score 1-5>,
  "completeness": <score 1-5>,
  "clarity": <score 1-5>,
  "overall_pass": true/false,
  "issues": ["list of specific problems if any"]
}}"""

    # Named `completion` so it does not shadow the `response` argument.
    completion = client.chat.completions.create(
        model="gpt-4o",
        messages=[{"role": "user", "content": evaluation_prompt}],
        response_format={"type": "json_object"},
    )
    return json.loads(completion.choices[0].message.content)


# Run evaluation. `model_output` and `test_case` are placeholders for your
# application's output and one entry from the evaluation dataset.
result = evaluate_response(
    query="What is your return policy?",
    response=model_output,
    golden_answer=test_case["golden_answer"],
)

assert result["overall_pass"], f"Evaluation failed: {result['issues']}"
```

Embedding-Based Metrics

Measure semantic similarity between model output and reference answers using embeddings. This catches responses that say the same thing differently.

- Cosine similarity — Compare embedding vectors of response and golden answer

- BERTScore — Token-level similarity using contextual embeddings

- Semantic textual similarity — Purpose-built models for comparing text meaning

Embedding metrics work well for factual Q&A where the "right answer" exists. They struggle with creative or open-ended tasks.
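
The cosine similarity check itself is only a few lines. This sketch uses toy 3-dimensional vectors in place of real embeddings, which would normally come from an embedding model of your choice; the threshold value is an assumption to be calibrated against labeled examples.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 1.0 means same direction."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat an all-zero vector as dissimilar to everything
    return dot / (norm_a * norm_b)


# Toy vectors standing in for real embeddings (assumption for illustration).
response_vec = [0.2, 0.8, 0.1]
golden_vec = [0.25, 0.75, 0.05]
unrelated_vec = [0.9, 0.05, 0.4]

SIMILARITY_THRESHOLD = 0.85  # assumed value; calibrate on your own data

close = cosine_similarity(response_vec, golden_vec)
far = cosine_similarity(response_vec, unrelated_vec)
print(close >= SIMILARITY_THRESHOLD, far >= SIMILARITY_THRESHOLD)
```

High similarity does not imply factual agreement: "refunds are available" and "refunds are not available" can embed close together, so pair this metric with a factuality check.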

Traditional NLP Metrics

Classic metrics still have their place:

- BLEU/ROUGE — N-gram overlap, useful for summarization and translation

- Exact match — For structured outputs like entity extraction

- Regex validation — Check format compliance (JSON, email, dates)

- Keyword presence — Verify required terms appear in response
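
Keyword presence and length checks map directly onto the `evaluation_criteria` block from the dataset format shown earlier. A minimal sketch follows; the function name is hypothetical, and it assumes `max_length` counts words, which the dataset format does not specify.

```python
def check_criteria(response: str, criteria: dict) -> list[str]:
    """Return a list of rule violations; an empty list means the response passes."""
    problems = []
    lowered = response.lower()
    for term in criteria.get("must_mention", []):
        if term.lower() not in lowered:
            problems.append(f"missing required term: {term!r}")
    for term in criteria.get("must_not_mention", []):
        if term.lower() in lowered:
            problems.append(f"contains forbidden term: {term!r}")
    max_length = criteria.get("max_length")
    # Assumption: max_length is measured in words.
    if max_length is not None and len(response.split()) > max_length:
        problems.append(f"too long: more than {max_length} words")
    return problems


criteria = {
    "must_mention": ["30 days", "refund"],
    "must_not_mention": ["guaranteed", "unlimited"],
    "max_length": 200,
}

good = "Annual subscriptions can receive a full refund within 30 days of purchase."
bad = "Refunds are guaranteed forever."

print(check_criteria(good, criteria))
print(check_criteria(bad, criteria))
```

Substring checks like these are cheap and deterministic, which makes them a good first gate before spending tokens on an LLM judge.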

Specialized Evaluators

Some evaluation tasks need purpose-built tools:

- Code execution — Run generated code and check outputs

- SQL validation — Execute queries and compare results

- Math verification — Compute expected answers and compare

- Citation checking — Verify claims trace back to sources (critical for RAG systems)
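
For the code execution case, a minimal sketch is to run the generated snippet in a child process and compare its output with the expected value. The function name is illustrative, and a child process with a timeout is not a security sandbox: untrusted model output should run in an isolated environment such as a container.

```python
import subprocess
import sys


def run_generated_code(code: str, stdin: str = "", timeout: float = 5.0) -> tuple[bool, str]:
    """Run a Python snippet in a child process; return (succeeded, stdout)."""
    try:
        proc = subprocess.run(
            [sys.executable, "-c", code],
            input=stdin,
            capture_output=True,
            text=True,
            timeout=timeout,
        )
    except subprocess.TimeoutExpired:
        return False, ""  # treat a hang as a failed test case
    return proc.returncode == 0, proc.stdout.strip()


# Stands in for model-generated code; the expected output comes from the test case.
generated = "print(sum(range(1, 11)))"
ok, output = run_generated_code(generated)
print(ok and output == "55")
```

Comparing behavior instead of text is what lets this evaluator accept any of the many correct implementations of the same function.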

Evaluation Frameworks Comparison

Several open-source frameworks help structure LLM evaluation. Each has different strengths.

| Framework | Best For | Key Features | Limitations |
|---|---|---|---|
| deepeval | Unit testing LLMs | Pytest integration, built-in metrics, CI/CD friendly | Smaller community, less customizable |
| ragas | RAG evaluation | RAG-specific metrics (context relevance, faithfulness), easy setup | Focused on RAG, less general-purpose |
| promptfoo | Prompt engineering | YAML config, side-by-side comparisons, model agnostic | Less Python-native, more CLI-focused |
| LangSmith | LangChain users | Deep LangChain integration, tracing, dataset management | LangChain ecosystem lock-in |
| Weights & Biases | ML teams | Experiment tracking, visualization, team collaboration | More complex setup, enterprise pricing |

deepeval Example

deepeval integrates with pytest for familiar testing patterns:

```python
from deepeval import assert_test
from deepeval.test_case import LLMTestCase
from deepeval.metrics import (
    AnswerRelevancyMetric,
    FaithfulnessMetric,
    ContextualRelevancyMetric,
)

# `rag_pipeline`, `model_response`, and `retrieved_docs` are placeholders for
# your own application objects; import or define them in your test module.


def test_rag_response():
    """Test that RAG responses are relevant and grounded."""
    test_case = LLMTestCase(
        input="What is the refund policy for annual plans?",
        actual_output=rag_pipeline.query("What is the refund policy for annual plans?"),
        expected_output="Annual plans can be refunded within 30 days...",
        retrieval_context=[
            "Refund Policy: Annual subscriptions are eligible for full refund within 30 days of purchase.",
            "After 30 days, refunds are prorated based on remaining subscription period.",
        ],
    )

    # Define evaluation metrics
    relevancy_metric = AnswerRelevancyMetric(threshold=0.7)
    faithfulness_metric = FaithfulnessMetric(threshold=0.8)
    context_metric = ContextualRelevancyMetric(threshold=0.7)

    # Run assertions
    assert_test(test_case, [relevancy_metric, faithfulness_metric, context_metric])


def test_no_hallucination():
    """Verify response doesn't contain claims unsupported by context."""
    test_case = LLMTestCase(
        input="What premium features are included?",
        actual_output=model_response,
        retrieval_context=retrieved_docs,
    )

    # Faithfulness checks that claims are grounded in context
    faithfulness = FaithfulnessMetric(threshold=0.9)
    assert_test(test_case, [faithfulness])
```

CI/CD Integration

Evaluation should run automatically on every change. Catching regressions before deployment is far cheaper than fixing them in production.

GitHub Actions Workflow

```yaml
name: LLM Evaluation Pipeline

on:
  push:
    branches: [main, develop]
  pull_request:
    branches: [main]

env:
  # Assumes the API key is stored as a repository secret with this name.
  OPENAI_API_KEY: ${{ secrets.OPENAI_API_KEY }}

jobs:
  evaluate:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4

      - name: Set up Python
        uses: actions/setup-python@v5
        with:
          python-version: '3.11'

      - name: Install dependencies
        run: |
          pip install -r requirements.txt
          pip install deepeval pytest

      - name: Run evaluation suite
        run: |
          deepeval test run tests/evaluation/

      - name: Run regression tests
        run: |
          pytest tests/evaluation/ -v --tb=short

      - name: Upload evaluation results
        uses: actions/upload-artifact@v4
        with:
          name: evaluation-results
          path: evaluation_results/

      - name: Check quality gates
        run: |
          python scripts/check_quality_gates.py \
            --min-relevancy 0.8 \
            --min-faithfulness 0.85 \
            --max-hallucination-rate 0.05

      - name: Comment PR with results
        if: github.event_name == 'pull_request'
        uses: actions/github-script@v7
        with:
          script: |
            const fs = require('fs');
            const results = JSON.parse(fs.readFileSync('evaluation_results/summary.json', 'utf8'));
            const body = `## LLM Evaluation Results

            | Metric | Score | Threshold | Status |
            |--------|-------|-----------|--------|
            | Relevancy | ${results.relevancy.toFixed(2)} | 0.80 | ${results.relevancy >= 0.8 ? 'Pass' : 'Fail'} |
            | Faithfulness | ${results.faithfulness.toFixed(2)} | 0.85 | ${results.faithfulness >= 0.85 ? 'Pass' : 'Fail'} |
            | Hallucination Rate | ${results.hallucination_rate.toFixed(2)} | 0.05 | ${results.hallucination_rate <= 0.05 ? 'Pass' : 'Fail'} |

            **Test Cases:** ${results.total_tests} | **Passed:** ${results.passed} | **Failed:** ${results.failed}
            `;
            // Await the call so the step does not finish before the comment is posted.
            await github.rest.issues.createComment({
              issue_number: context.issue.number,
              owner: context.repo.owner,
              repo: context.repo.repo,
              body: body
            });
```

Quality Gates

Define clear thresholds that must pass before deployment:

- Minimum accuracy scores — Relevancy, faithfulness, factuality above thresholds

- Maximum regression — No more than X% degradation from baseline

- Zero critical failures — Safety violations, format errors, or complete failures block deployment

- Coverage requirements — Evaluation must cover all major query categories
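
The workflow above calls `scripts/check_quality_gates.py` without showing it. The sketch below is one hypothetical way such a script could look, not the actual implementation: it assumes `evaluation_results/summary.json` contains `relevancy`, `faithfulness`, and `hallucination_rate` keys (the same ones the PR comment step reads), and the `--summary` flag is an addition for this example.

```python
import argparse
import json


def failed_gates(summary: dict, min_relevancy: float, min_faithfulness: float,
                 max_hallucination_rate: float) -> list[str]:
    """Return a description of every gate the summary fails."""
    failures = []
    if summary["relevancy"] < min_relevancy:
        failures.append(f"relevancy {summary['relevancy']:.2f} < {min_relevancy:.2f}")
    if summary["faithfulness"] < min_faithfulness:
        failures.append(f"faithfulness {summary['faithfulness']:.2f} < {min_faithfulness:.2f}")
    if summary["hallucination_rate"] > max_hallucination_rate:
        failures.append(
            f"hallucination_rate {summary['hallucination_rate']:.2f} > {max_hallucination_rate:.2f}"
        )
    return failures


def main(argv=None) -> int:
    """Parse thresholds, load the summary file, and return a process exit code."""
    parser = argparse.ArgumentParser()
    parser.add_argument("--summary", default="evaluation_results/summary.json")
    parser.add_argument("--min-relevancy", type=float, default=0.8)
    parser.add_argument("--min-faithfulness", type=float, default=0.85)
    parser.add_argument("--max-hallucination-rate", type=float, default=0.05)
    args = parser.parse_args(argv)
    with open(args.summary, encoding="utf-8") as fh:
        summary = json.load(fh)
    failures = failed_gates(summary, args.min_relevancy, args.min_faithfulness,
                            args.max_hallucination_rate)
    for failure in failures:
        print(f"GATE FAILED: {failure}")
    return 1 if failures else 0


# Inline example with a hand-written summary instead of a file.
example = {"relevancy": 0.82, "faithfulness": 0.80, "hallucination_rate": 0.03}
print(failed_gates(example, 0.8, 0.85, 0.05))
```

Ending the file with `sys.exit(main())` under an `if __name__ == "__main__":` guard would turn it into a command-line script; a non-zero exit code is what makes the CI step fail and block the merge.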

Production Monitoring

Evaluation doesn't stop at deployment. Production traffic reveals failure modes that testing misses.

Online Evaluation

Run evaluation continuously on production traffic:

- Sample-based evaluation — Evaluate a random sample of queries automatically

- Shadow evaluation — Run candidate models alongside production and compare

- A/B testing — Compare model versions with real users
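
Sample-based evaluation needs a rule for which requests to evaluate. One possible approach, sketched here under the assumption that every request has a stable string ID, is to hash the ID into a bucket so the decision is deterministic and needs no shared state. The function name and the 5% rate are illustrative.

```python
import hashlib


def should_evaluate(request_id: str, sample_rate: float) -> bool:
    """Deterministically select roughly `sample_rate` of requests for evaluation."""
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    # Map the first 4 bytes of the hash to a number in [0, 1).
    bucket = int.from_bytes(digest[:4], "big") / 2**32
    return bucket < sample_rate


request_ids = [f"req-{i}" for i in range(10_000)]
selected = [rid for rid in request_ids if should_evaluate(rid, 0.05)]
print(len(selected) / len(request_ids))
```

Because the decision depends only on the ID, retries of the same request are treated consistently, and a shadow model can be evaluated on exactly the same sample as the production model.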

User Feedback Integration

Explicit and implicit user signals are invaluable:

- Thumbs up/down — Direct quality signal from users

- Regeneration requests — User asking for new response indicates failure

- Follow-up questions — May indicate incomplete or confusing responses

- Task completion — Did the user accomplish their goal?

Alerting on Quality Degradation

Set up alerts for quality drops:

- Rolling accuracy metrics — Track 24h rolling average, alert on significant drops

- Error rate spikes — Sudden increase in format errors or failed generations

- Latency degradation — P95 latency exceeding thresholds

- User satisfaction drops — Declining thumbs-up ratio or increasing complaints
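
A rolling-average alert can be sketched in a few lines. For simplicity this version uses a window of the last N scores instead of a 24-hour time window, and the class name, baseline, and allowed drop are assumptions chosen for the example.

```python
from collections import deque


class RollingQualityMonitor:
    """Tracks a rolling mean of scores and flags drops below a baseline."""

    def __init__(self, window: int, baseline: float, max_drop: float):
        self.scores = deque(maxlen=window)  # oldest scores fall out automatically
        self.baseline = baseline
        self.max_drop = max_drop

    def add(self, score: float) -> bool:
        """Record a score; return True if an alert should fire."""
        self.scores.append(score)
        if len(self.scores) < self.scores.maxlen:
            return False  # not enough data to judge yet
        mean = sum(self.scores) / len(self.scores)
        return mean < self.baseline - self.max_drop


monitor = RollingQualityMonitor(window=5, baseline=0.90, max_drop=0.05)
healthy_alerts = [monitor.add(s) for s in [0.90, 0.92, 0.88, 0.90, 0.91]]
degraded_alert = monitor.add(0.60)  # one bad score pulls the rolling mean down
print(healthy_alerts, degraded_alert)
```

With such a small window a single bad response triggers the alert; production windows are usually much larger so that alerts reflect sustained degradation rather than one outlier.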

Common Evaluation Mistakes

Teams new to LLM evaluation often fall into these traps.

Testing on Training Data

If your test cases overlap with data used to fine-tune or prompt-engineer the model, you're measuring memorization, not generalization. Keep strict separation between training and evaluation data.

Overfitting to Benchmarks

Optimizing for evaluation metrics can diverge from actual user satisfaction. A model that scores perfectly on your test suite but frustrates real users is a failure. Include user feedback in your evaluation loop.

Ignoring Edge Cases

Most evaluation datasets skew toward common, easy cases. Real production traffic includes adversarial inputs, unusual phrasing, out-of-scope queries, and combined intents. Deliberately include difficult cases in your test suite.

Static Evaluation Sets

A fixed evaluation dataset becomes stale as your product evolves and user behavior changes. Continuously refresh test cases from production data.

Single-Metric Focus

Optimizing for one metric often degrades others. A model that maximizes factuality by being extremely conservative will fail on completeness. Track multiple dimensions and understand their trade-offs.

Insufficient Test Volume

With 20 test cases, a single failure moves your pass rate by 5 percentage points, so small differences between runs are mostly noise. You need enough test cases for statistically meaningful results: typically 100+ for core functionality, 500+ for production readiness.
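
To see how much uncertainty a small test set carries, you can put a confidence interval around the pass rate. The sketch below uses the Wilson score interval, which is one standard choice for a binomial proportion; the function name is illustrative.

```python
import math


def wilson_interval(passed: int, total: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% confidence interval (z=1.96) for a pass rate."""
    if total <= 0:
        raise ValueError("total must be positive")
    p = passed / total
    denom = 1 + z**2 / total
    center = (p + z**2 / (2 * total)) / denom
    half_width = z * math.sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denom
    return max(0.0, center - half_width), min(1.0, center + half_width)


small = wilson_interval(18, 20)     # 90% pass rate on 20 cases
large = wilson_interval(450, 500)   # 90% pass rate on 500 cases
print(small)
print(large)
```

Both runs report a 90% pass rate, but the 20-case interval spans roughly 0.70 to 0.97 while the 500-case interval spans roughly 0.87 to 0.92. Only the larger set can distinguish a real regression of a few points from noise.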

How Virtido Can Help You Build LLM Evaluation Pipelines

At Virtido, we help enterprises build production-grade AI systems, including the evaluation infrastructure that ensures they work reliably. Our teams combine ML engineering expertise with practical deployment experience.

What We Offer

- Evaluation pipeline design — Architect testing systems tailored to your LLM applications and use cases

- Dataset creation and curation — Build high-quality evaluation datasets from your production data

- CI/CD integration — Implement automated evaluation in your development workflow

- Production monitoring — Deploy real-time quality tracking and alerting systems

- AI/ML engineers on demand — Augment your team with vetted specialists in 2-4 weeks

We've built evaluation systems for LLM applications across financial services, healthcare, e-commerce, and enterprise software. Our staff augmentation model provides Swiss contracts and full IP protection.

Final Thoughts

LLM evaluation is the unsexy work that separates demos from production systems. Without rigorous evaluation, you're deploying AI on faith — hoping it works, unable to detect when it degrades, and surprised when users complain. Building proper evaluation infrastructure requires upfront investment, but it pays dividends in deployment confidence, faster iteration, and early detection of problems.

The key insight is that LLM evaluation requires a different mindset than traditional software testing. You're not checking for exact correctness — you're measuring quality across multiple dimensions, accepting that "correct" often means "good enough for the use case." This requires human judgment encoded into automated systems, continuous monitoring of production behavior, and honest acknowledgment of what your tests can and cannot catch.

Start simple: build a small evaluation dataset from real queries, implement LLM-as-Judge for core quality dimensions, integrate testing into your CI/CD pipeline, and monitor production feedback. Expand coverage as you learn where your system fails. The goal isn't perfect evaluation — it's sufficient confidence that your system works well enough to serve real users, and fast feedback when it stops working.

Frequently Asked Questions

How many test cases do I need for LLM evaluation?

Start with 50-100 high-quality test cases covering your core use cases. For production readiness, aim for 500+ test cases across all query categories. The key is quality over quantity — 100 well-crafted test cases with accurate golden answers beat 1,000 poorly constructed ones. Expand continuously by sampling from production failures and edge cases.

Can I use GPT-4 to evaluate GPT-4 outputs?

Yes, and this approach (LLM-as-Judge) works well for many use cases. Published studies of LLM-as-Judge report that strong judge models such as GPT-4 often agree with human raters about as well as human raters agree with each other, although agreement varies by task and rubric. However, be aware of potential biases: a model may be lenient toward its own style of responses. For critical applications, combine LLM-as-Judge with human review on a sample of cases and use a different model family for evaluation when possible.

What accuracy targets should I set for production LLM systems?

Targets depend on your use case and the cost of errors. Customer-facing applications typically require 85-95% relevance scores. Safety-critical applications (medical, legal, financial) need 95%+ factuality with near-zero tolerance for harmful outputs. Internal tools can often tolerate lower thresholds (75-85%). Start with baselines from your current system (even if manual) and set incremental improvement targets.

How do I test for hallucinations specifically?

Test hallucination through faithfulness evaluation: check whether claims in the response are supported by provided context or verifiable sources. For RAG systems, use metrics like RAGAS faithfulness score that compare generated statements against retrieved documents. Include test cases with questions the model shouldn't be able to answer — a good system says "I don't know" rather than inventing facts. Regularly audit production responses for factual accuracy.

How do I evaluate RAG systems differently from standard LLMs?

RAG evaluation adds retrieval quality to generation quality. Evaluate the retrieval component separately: are the right documents being retrieved? Then evaluate generation: is the response faithful to retrieved context? Key RAG-specific metrics include context relevance (are retrieved docs relevant to query?), context utilization (does the response use the context?), and groundedness (are claims traceable to sources?). See our RAG guide for architecture details.

How often should I re-evaluate my LLM system?

Run automated evaluation on every code change that touches the LLM pipeline. For production monitoring, sample and evaluate continuously — at minimum daily, ideally on a rolling basis throughout the day. Re-run full evaluation suites whenever you update prompts, switch models, retrain embeddings, or change retrieval logic. Also trigger evaluation when you observe quality issues in production metrics.

What do I do when human annotators disagree on whether a response is correct?

Annotator disagreement reveals ambiguity in your evaluation criteria or genuinely difficult cases. First, improve annotation guidelines to reduce ambiguity. Use multiple annotators per case and measure inter-annotator agreement (Cohen's kappa for two annotators, Krippendorff's alpha or Fleiss' kappa for more than two). For cases with legitimate disagreement, consider accepting multiple valid answers or using majority voting. High-disagreement cases often make poor automated test cases, so flag them for human review instead.
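
For two annotators, Cohen's kappa can be computed directly from the label lists. This is a minimal from-scratch sketch for illustration; libraries such as scikit-learn provide tested implementations.

```python
from collections import Counter


def cohens_kappa(labels_a: list, labels_b: list) -> float:
    """Agreement between two annotators, corrected for chance agreement."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and the same length")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    # Chance agreement: probability both pick the same label independently.
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used a single identical label throughout
    return (observed - expected) / (1 - expected)


annotator_1 = ["pass", "pass", "fail", "fail"]
annotator_2 = ["pass", "fail", "fail", "fail"]
print(cohens_kappa(annotator_1, annotator_2))  # 0.5
```

Here the annotators agree on 3 of 4 cases (75%), but half of that agreement is expected by chance, so kappa is 0.5. Raw percent agreement alone would overstate how consistent the labels are.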

How do I handle non-determinism in LLM outputs during testing?

Set temperature to 0 for reproducibility, though this doesn't guarantee identical outputs across API calls. Run each test case multiple times (3-5) and evaluate statistical consistency rather than exact matches. Use semantic similarity and LLM-as-Judge evaluation rather than exact string comparison. For CI/CD, set pass thresholds that account for variance — require 4/5 runs to pass rather than perfect consistency. Track variance as a metric itself.
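
The "4 of 5 runs" rule can be expressed as a small helper. In this sketch `run_once` is a hypothetical callable that generates a response and returns whether it passed evaluation; the example replaces it with a fixed sequence so the snippet runs without a model.

```python
from typing import Callable


def passes_k_of_n(run_once: Callable[[], bool], n: int = 5, k: int = 4) -> tuple[bool, float]:
    """Run a test n times; pass if at least k runs succeed. Also return the pass rate."""
    results = [bool(run_once()) for _ in range(n)]
    successes = sum(results)
    return successes >= k, successes / n


# Stand-in for "generate a response and judge it"; a real run would call the model.
outcomes = iter([True, True, False, True, True])
passed, pass_rate = passes_k_of_n(lambda: next(outcomes))
print(passed, pass_rate)  # True 0.8
```

Logging the pass rate alongside the boolean result lets you track variance over time, so a test that drifts from 5/5 to 4/5 is visible before it starts failing.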

Should I evaluate prompts separately from models?

Yes, treat prompts as code that needs testing. When changing prompts, run evaluation against a fixed model to isolate prompt impact. When changing models, evaluate with fixed prompts. This separation helps you understand whether regressions come from prompt changes or model changes. Version control prompts alongside code and include them in your evaluation dataset metadata so you can reproduce historical evaluations.

What's the best way to evaluate multi-turn conversations?

Multi-turn evaluation is significantly harder than single-turn. Test conversation-level metrics: does the assistant maintain context? Does it handle reference resolution ("What about the second option?")? Does conversation quality degrade over turns? Create test scenarios that cover common multi-turn patterns (clarification, follow-up, topic switch). Evaluate both individual turn quality and overall conversation success. Track metrics like conversation completion rate and turns-to-resolution.